Step-1: Gradient Descent starts with a random solution,

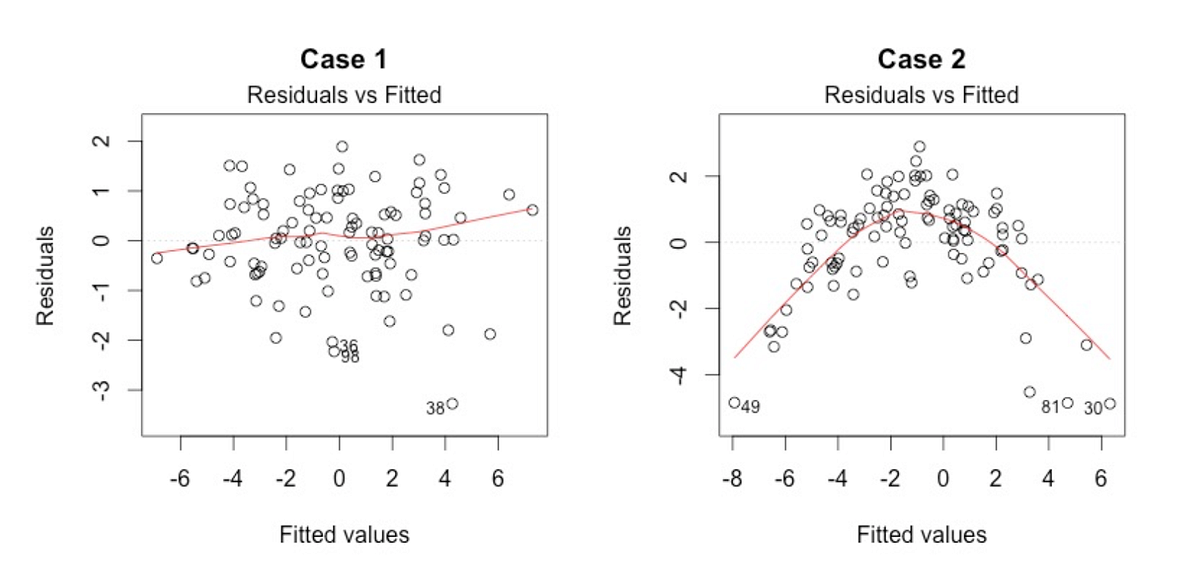

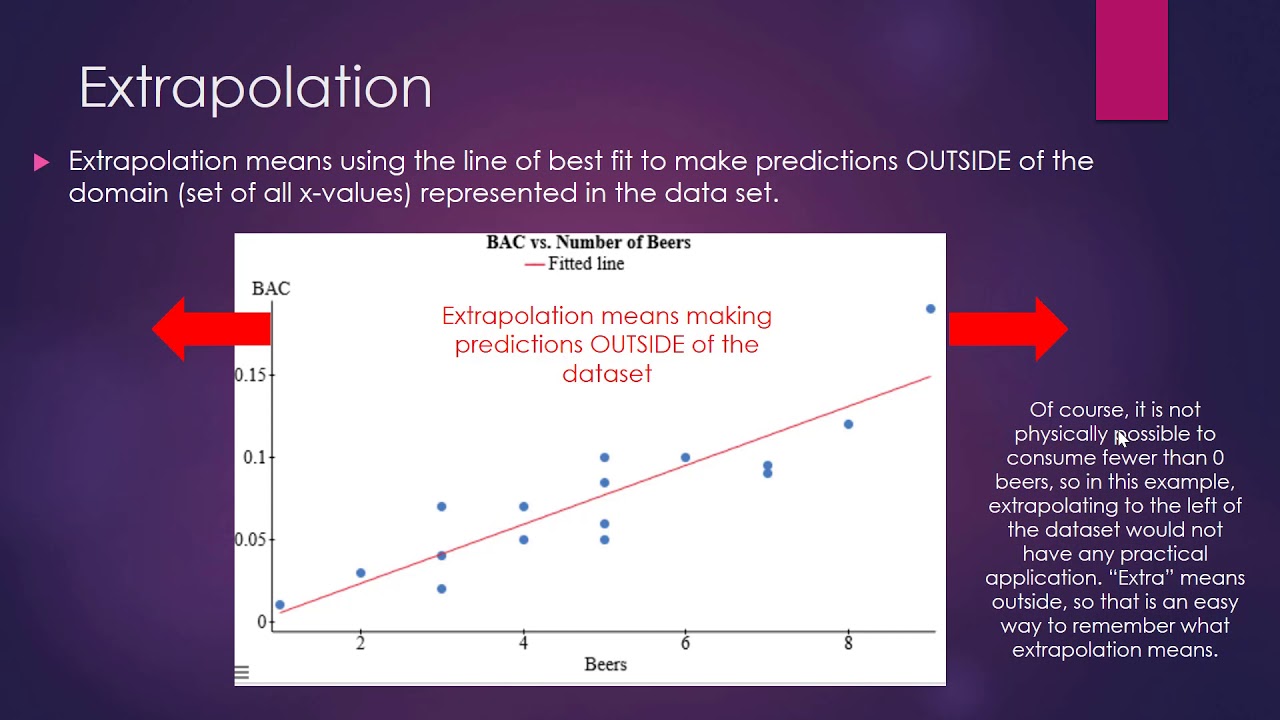

Y is the target column or output, and m is the number of data points in the training set. Here, h is the linear hypothesis model, defined as h=θ 0 + θ 1x, Mathematically, the main objective of the gradient descent for linear regression is to find the solution of the following expression,ĪrgMin J(θ 0, θ 1), where J(θ 0, θ 1) represents the cost function of the linear regression. In that case, the ball moves along the direction of the maximum gradient and comes to rest at the flat surface i.e, corresponds to minima. In linear regression, this algorithm is used to optimize the cost function to find the values of the β s (estimators) corresponding to the optimized value of the cost function.The working of Gradient descent is similar to a ball that rolls down a graph (ignoring the inertia). Gradient descent is a first-order optimization algorithm. Explain the difference between Correlation and Regression. there is no multicollinearity in the data.Ĥ. There does not exist a linear dependency between the independent variables, i.e.The independent variables are measured without error.their pair-wise covariance value is zero. Independent error assumption: The residual terms are independent of each other, i.e.This assumption is also called the assumption of homogeneity or homoscedasticity. Constant variance assumption: The residual terms have the same (but unknown) value of variance, σ 2.Zero mean assumption: The residuals have a mean value of zero.Normality assumption: The error terms, ε(i), are normally distributed.Sometimes, this assumption is known as the ‘linearity assumption’. It is assumed that there exists a linear relationship between the dependent and the independent variables. Now, let’s break these assumptions into different categories: Assumptions about the form of the model: Normality: The error(residuals) follows a normal distribution.

Independence: Observations are independent of each other.Multicollinearity: There is no multicollinearity between the features.Homoscedasticity: The error term has a constant variance.Linearity: The relationship between the features and target.The basic assumptions of the Linear regression algorithm are as follows: What are the basic assumptions of the Linear Regression Algorithm? Therefore, a linear regression model is quite easy to interpret.įor Example, if we increase the value of x 1 increases by 1 unit, keeping other variables constant, then the total increase in the value of y will be β i and the intercept term (β 0) is the response when all the predictor’s terms are set to zero or not considered. The significance of the linear regression model lies in the fact that we can easily interpret and understand the marginal changes in the independent variables(predictors) and observed their consequences on the dependent variable(response). How do you interpret a linear regression model?Īs we know that the linear regression model is of the form:

It is mostly done with the help of the Sum of Squared Residuals Method, known as the Ordinary least squares (OLS) method. In technical terms: It is a supervised machine learning algorithm that finds the best linear-fit relationship on the given dataset, between independent and dependent variables. tries to find the best linear relationship between the independent and dependent variables. In simple terms: It is a method of finding the best straight line fitting to the given dataset, i.e. In this article, we will discuss the most important questions on the Linear Regression Algorithm which is helpful to get you a clear understanding of the Algorithm, and also for Data Science Interviews, which covers its very fundamental level to complex concepts. Therefore it becomes necessary for every aspiring Data Scientist and Machine Learning Engineer to have a good knowledge of the Linear Regression Algorithm. It is a linear approach to modeling the relationship between a scalar response and one or more explanatory variables. Linear Regression, a supervised technique is one of the simplest Machine Learning algorithms. This article was published as a part of the Data Science Blogathon Introduction
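The gradient descent procedure described in this article can be sketched in Python with NumPy for the single-feature hypothesis $h = \theta_0 + \theta_1 x$. The sketch makes several assumptions.

- It assumes the common cost $J(\theta_0, \theta_1) = \frac{1}{2m}\sum_{i=1}^{m}(h(x_i) - y_i)^2$. The factor $\frac{1}{2}$ is only a convention that simplifies the gradient.
- The function name `gradient_descent` is hypothetical, and so are the learning rate `alpha`, the tolerance `tol`, the iteration cap and the synthetic data. None of them come from the article.
- It starts from a random solution, as in Step-1, and stops once the gradient is close to zero, i.e., once the "ball" reaches the flat bottom.

```python
import numpy as np


def gradient_descent(x, y, alpha=0.1, tol=1e-8, max_iters=10_000, seed=0):
    """Fit h(x) = theta0 + theta1 * x by batch gradient descent.

    Assumed cost: J(theta0, theta1) = (1 / (2m)) * sum((h(x_i) - y_i) ** 2).
    alpha, tol, max_iters and seed are illustrative defaults (hypothetical).
    """
    m = len(x)
    rng = np.random.default_rng(seed)
    theta0, theta1 = rng.normal(size=2)       # Step 1: start from a random solution
    for _ in range(max_iters):
        error = theta0 + theta1 * x - y       # h(x_i) - y_i for every training point
        grad0 = error.sum() / m               # partial derivative dJ/dtheta0
        grad1 = (error * x).sum() / m         # partial derivative dJ/dtheta1
        # Step against the gradient, i.e. in the direction of steepest descent
        theta0 -= alpha * grad0
        theta1 -= alpha * grad1
        if np.hypot(grad0, grad1) < tol:      # gradient ~ 0: flat surface, a minimum
            break
    return theta0, theta1


# Usage on synthetic data (true intercept 4, true slope 3, plus Gaussian noise)
rng = np.random.default_rng(42)
x = rng.uniform(0, 2, size=100)
y = 4.0 + 3.0 * x + rng.normal(scale=0.5, size=100)

theta0, theta1 = gradient_descent(x, y)
slope, intercept = np.polyfit(x, y, deg=1)   # closed-form least-squares fit for comparison
print(f"gradient descent: theta0={theta0:.4f}, theta1={theta1:.4f}")
print(f"least squares:    theta0={intercept:.4f}, theta1={slope:.4f}")
```

This cost is a convex quadratic in $\theta_0$ and $\theta_1$. So with a small enough learning rate, gradient descent should reach the same parameters as the closed-form OLS fit, up to the stopping tolerance. For a single feature and a small dataset the closed form is simpler. Gradient descent becomes attractive when the number of features or data points is large.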
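The interpretation and assumptions questions above can be illustrated with a small Python/NumPy sketch. It fits a two-feature model by least squares using `np.linalg.lstsq` and checks three of the points made there:

- Raising $x_1$ by one unit while holding $x_2$ fixed changes the prediction by exactly $\beta_1$.
- The residuals of an OLS fit with an intercept have zero mean. This holds by construction of the fit, so it does not by itself validate the assumption about the true errors.
- The variance inflation factor (VIF) stays near 1 when the features have no linear dependency. VIF is a common multicollinearity diagnostic that the article does not mention.

The helper names (`fit_ols`, `predict`, `vif`) and the synthetic data are hypothetical.

```python
import numpy as np


def fit_ols(X, y):
    """Least-squares fit of y = b0 + b1*x1 + ... + bn*xn; returns [b0, b1, ..., bn]."""
    A = np.column_stack([np.ones(len(X)), X])   # column of ones carries the intercept b0
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    return beta


def predict(beta, X):
    return beta[0] + X @ beta[1:]


def vif(X):
    """Variance inflation factor per column: regress each feature on all the others."""
    factors = []
    for j in range(X.shape[1]):
        target = X[:, j]
        others = np.delete(X, j, axis=1)
        resid = target - predict(fit_ols(others, target), others)
        r2 = 1 - resid.var() / target.var()     # R^2 of feature j given the rest
        factors.append(1 / (1 - r2))            # 1.0 means no linear dependency
    return np.array(factors)


# Hypothetical data: two independent features, true model y = 1.5 + 2.0*x1 - 0.7*x2 + noise
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
y = 1.5 + 2.0 * X[:, 0] - 0.7 * X[:, 1] + rng.normal(scale=0.1, size=200)

beta = fit_ols(X, y)
row = X[:1]                  # a single observation, shape (1, 2)
bumped = row.copy()
bumped[0, 0] += 1            # increase x1 by one unit, hold x2 constant
change = (predict(beta, bumped) - predict(beta, row))[0]
residuals = y - predict(beta, X)

print("coefficients:", beta)                       # should be close to [1.5, 2.0, -0.7]
print("change in y:", change, "vs b1:", beta[1])   # equal up to floating-point rounding
print("mean residual:", residuals.mean())          # ~0 by construction (OLS with an intercept)
print("VIF:", vif(X))                              # near 1 for independent features
```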
